The real plots straight from the repo. The interactive demos above are stylized companions; these are the source of truth.

The standard rank-1 recipe for removing refusal — one weight perturbation that has uncensored every other open model since 2023 — barely budges Gemma 4. We chase the missing ingredient through a six-stage mechanistic cascade, and find safety lives in a geometry we did not expect.

When Google first released Gemma 4, I was thinking — maybe this could save lives. I used to hike a lot. We hike in remote areas where there is no phone reception, and you can barely find anybody else. Maybe you meet a person once or twice per hour. If something happens, we are truly on our own.

— story.md · author note, paraphrased for the web

With Gemma 4, this becomes a reality in the palm of your hand. An open-weight model — intelligent enough to triage an emergency — running on a phone at decent speed.

offline · 4B params · 8-bit quant · ~7.5 GB

So I downloaded Gemma 4 E2B IT to my phone, and I asked it the question I'd actually want to ask in the field:

A safety guardrail — exactly the behavior that protects the model in adversarial settings — refuses to engage with a question that might keep someone alive.

Keep refusal where it matters — weapons, abuse, malicious code. Remove it where it harms — medical triage, wilderness survival, home safety. That is the geometric question.

Run a stream of harmful prompts and a stream of harmless prompts through the model. At every layer, take the mean activation of each. The difference between those means is a single vector in the residual stream — the refusal direction.

Rank-1 abliteration is the surgery: project that direction out of the model's output projections (o_proj, down_proj) by subtracting the rank-1 outer product. One direction, removed everywhere. On most models, refusal collapses.

$W$ is any output-projection weight. $\hat{\mathbf{r}}$ is the unit refusal direction. $\alpha\in[0,2]$ is the surgical depth.

A four-stage pipeline. Each stage feeds the next. We start with raw refusal behavior, dig down to the residual stream, attempt surgery, and reverse-engineer the published "uncensored" forks.

342 prompts · 8 categories. Measure refusal rates on harm, emergency-medical, wilderness, home safety, mental-health, chemistry. Establish the over-refusal baseline.

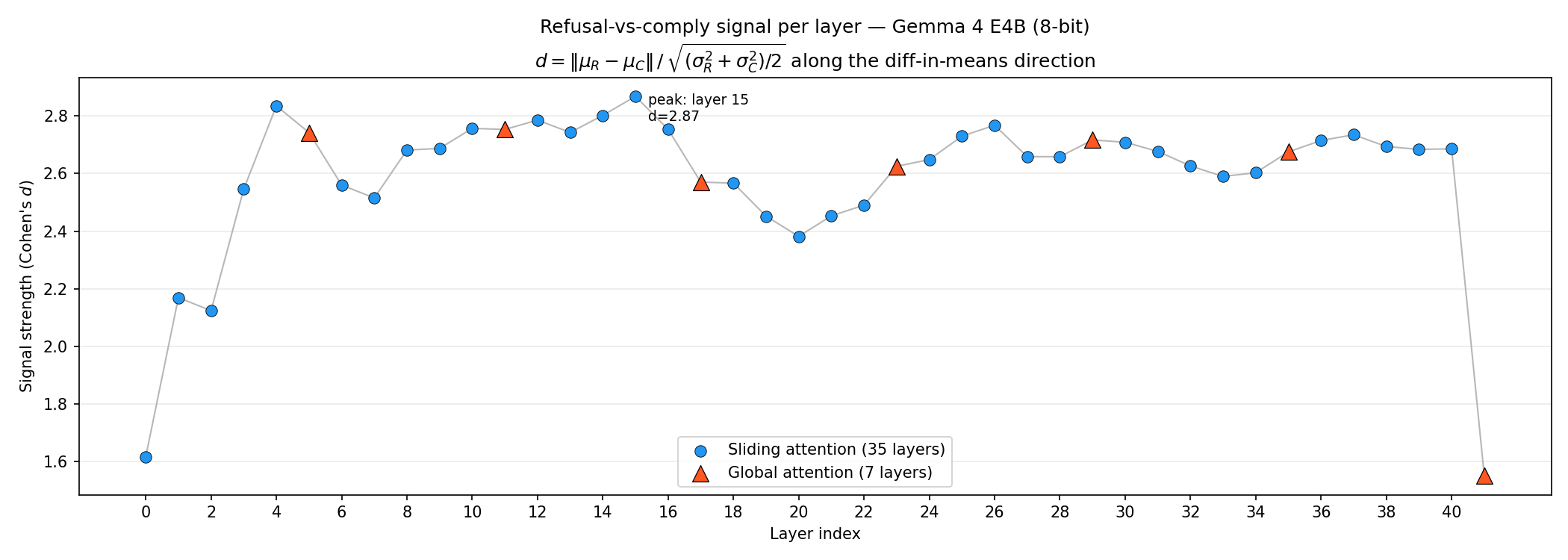

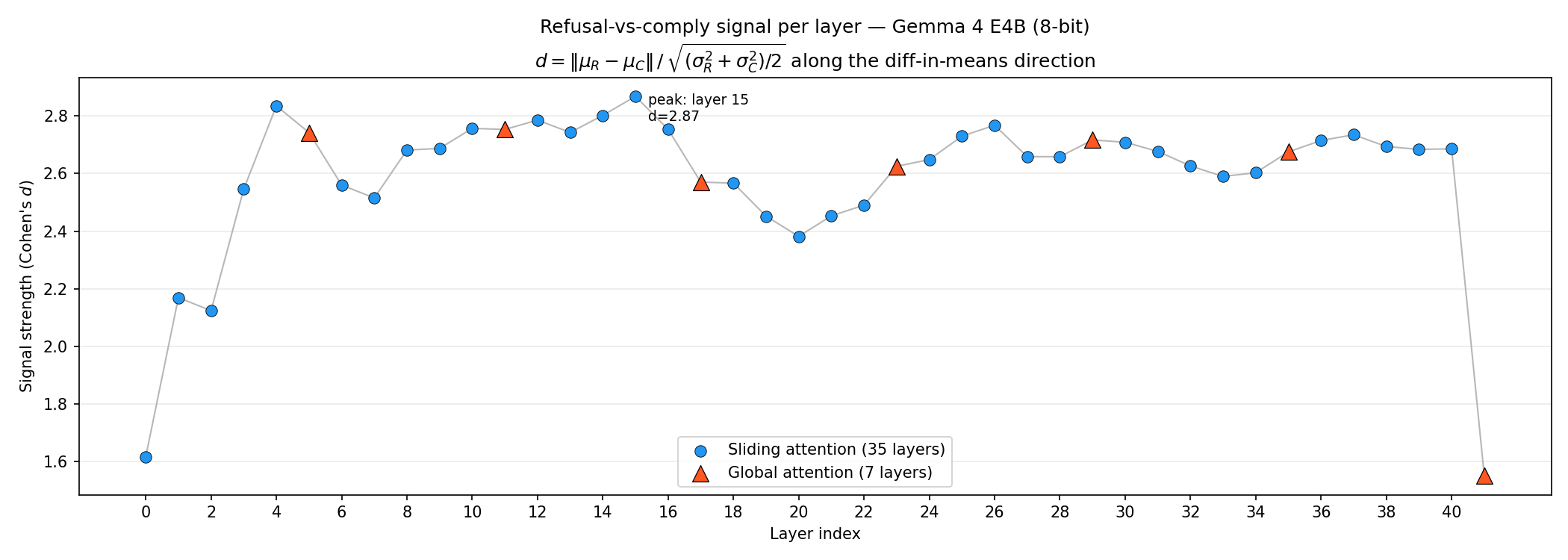

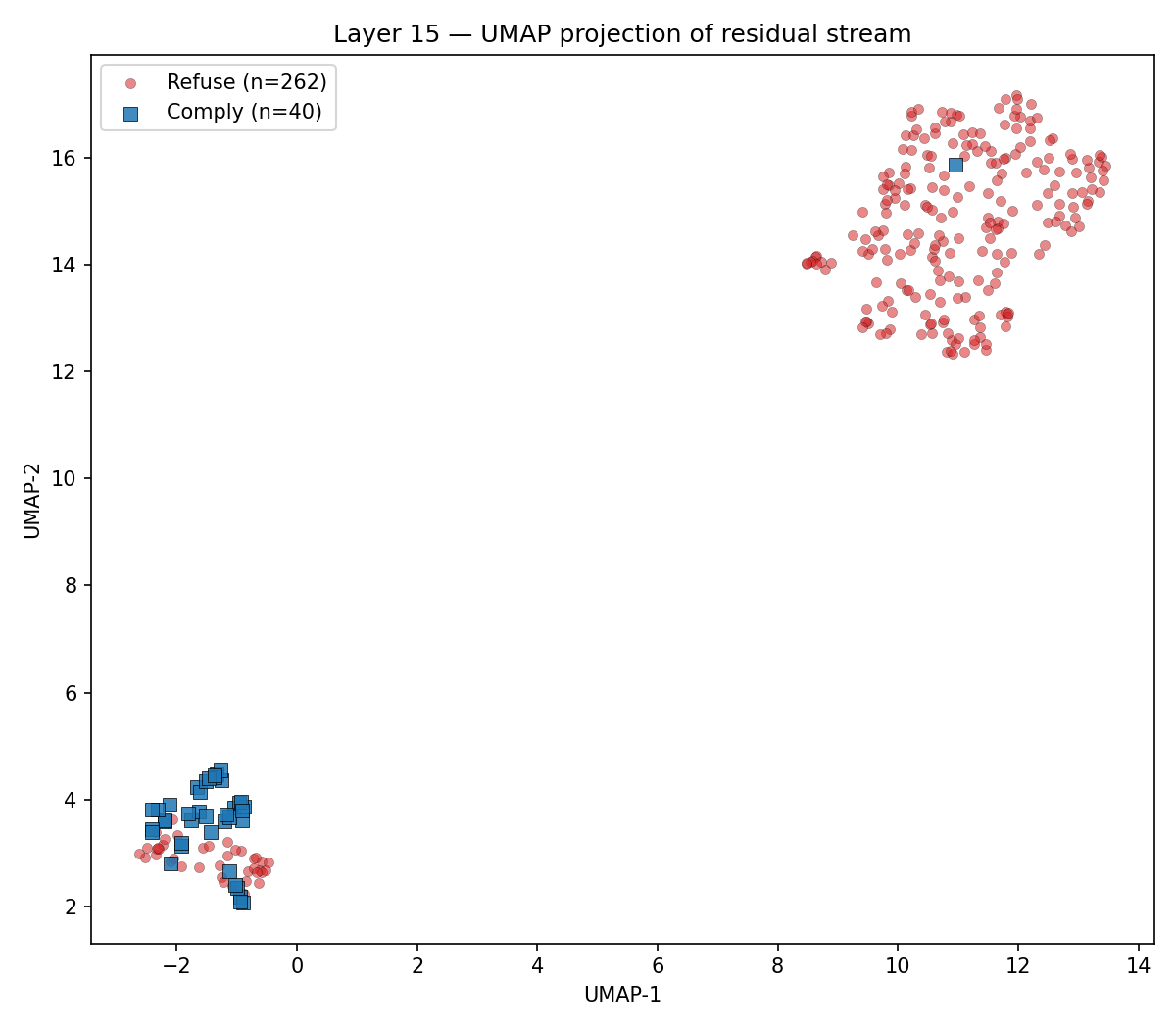

Extract residual-stream activations across 42 layers. Compute Cohen's d, fit UMAP, locate the refusal direction. Peak at L15 · d = 2.87.

Sweep α∈[0, 2], 9 layer subsets, random control. Then a 6-stage causal cascade isolates the load-bearing ingredient.

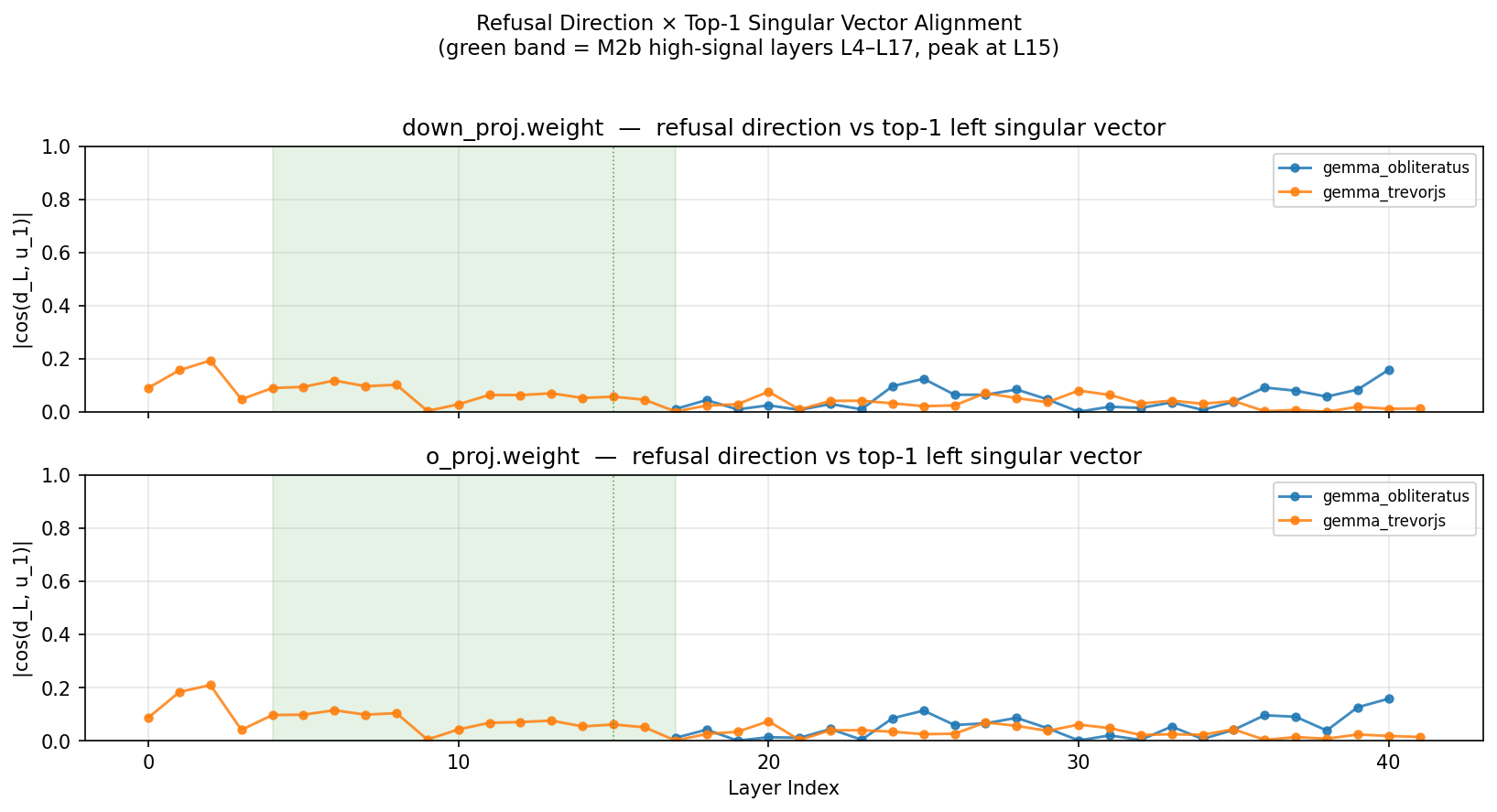

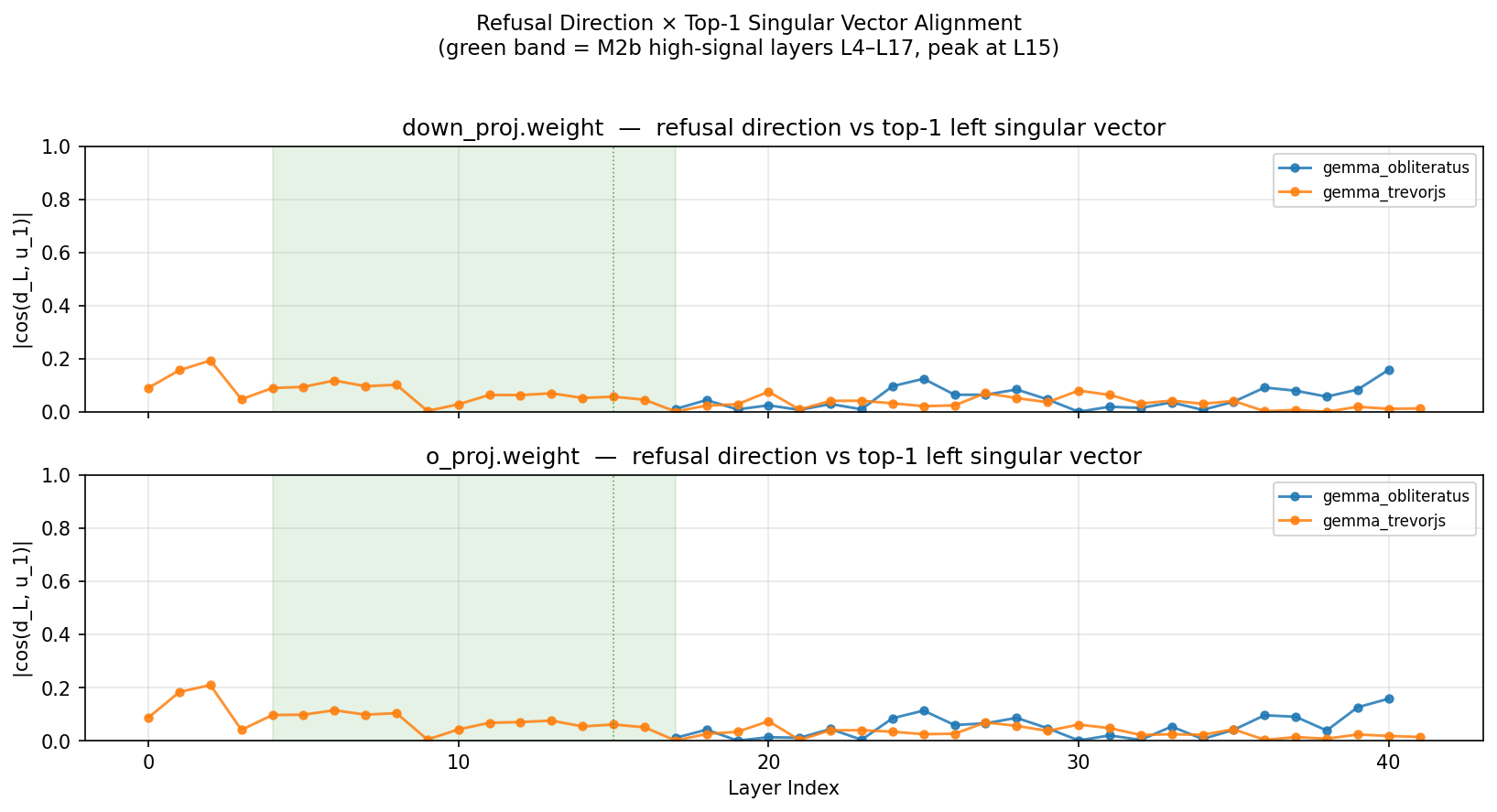

Diff base ↔ OBLITERATUS ↔ TrevorJS. SVD each delta. Compare rank, orientation, and overlap with M2 direction.

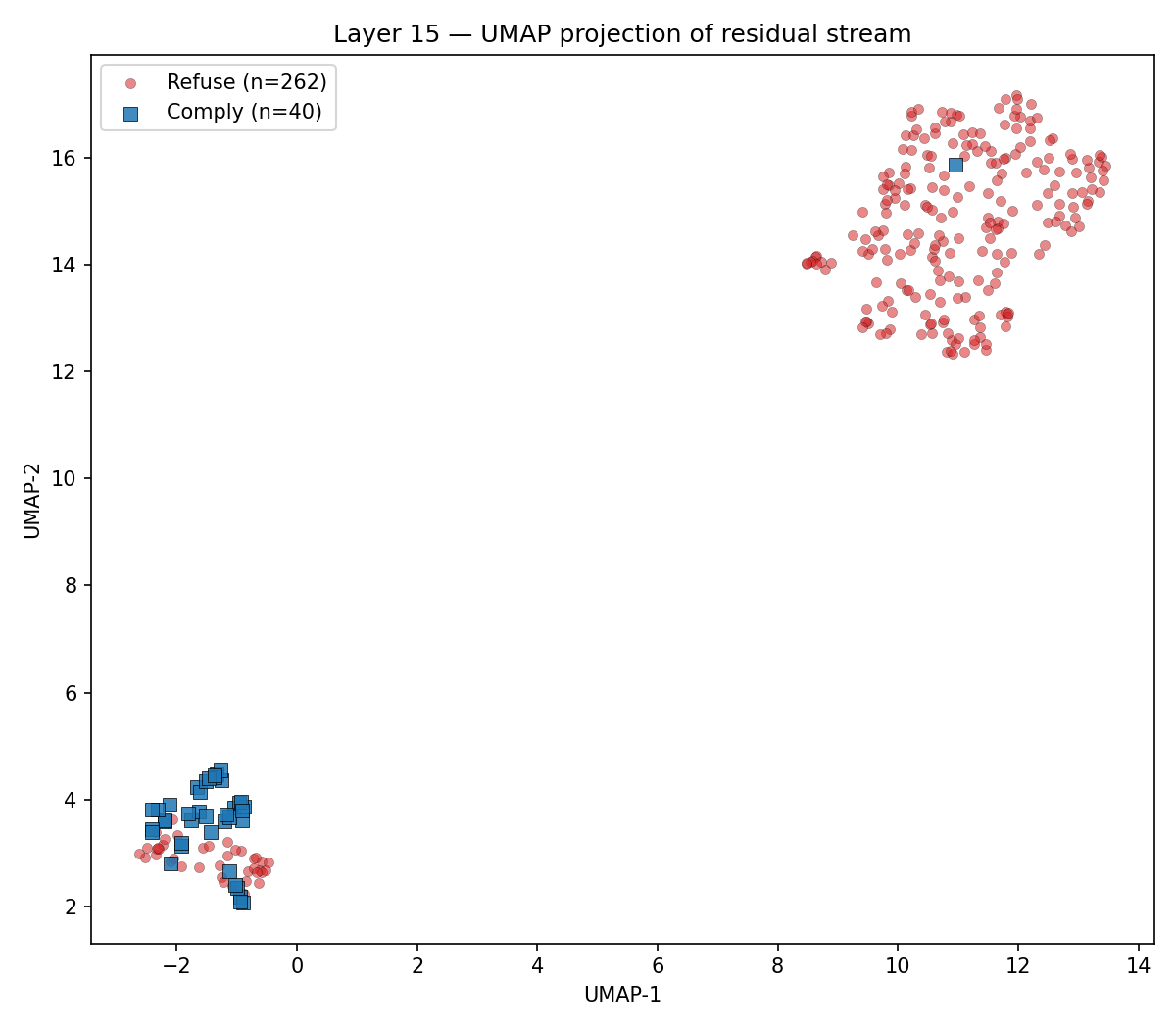

Two views of the same observation: at one specific layer, refuse-class and comply-class prompts separate into two clean clouds in 2D — and that separation has a signature across depth that peaks sharply at L15.

Top-1 PC captures 86.6% of |Δμ|² at the L4–L17 band. Yellow ticks mark global-attention layers; the refusal signal concentrates around them.

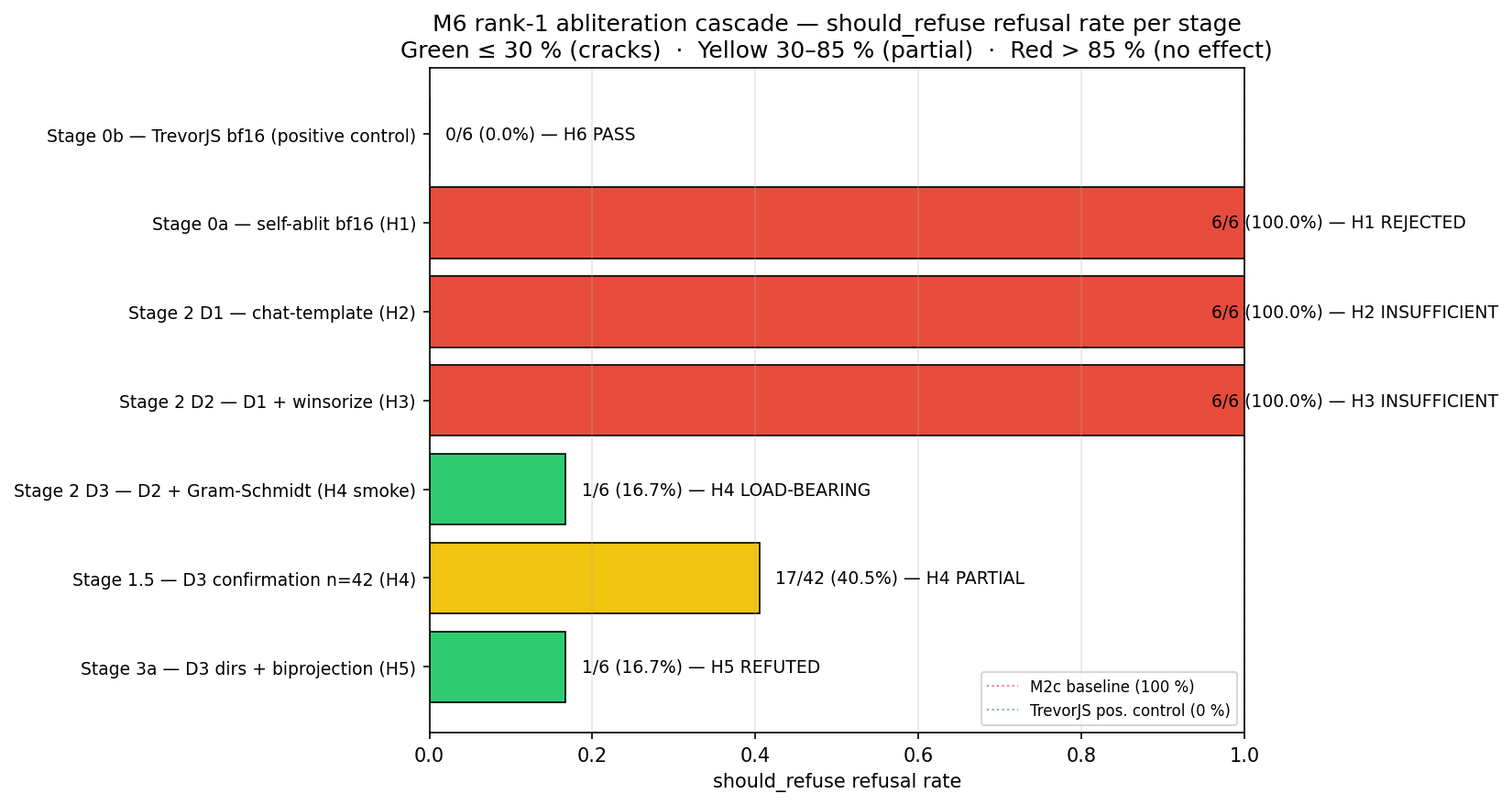

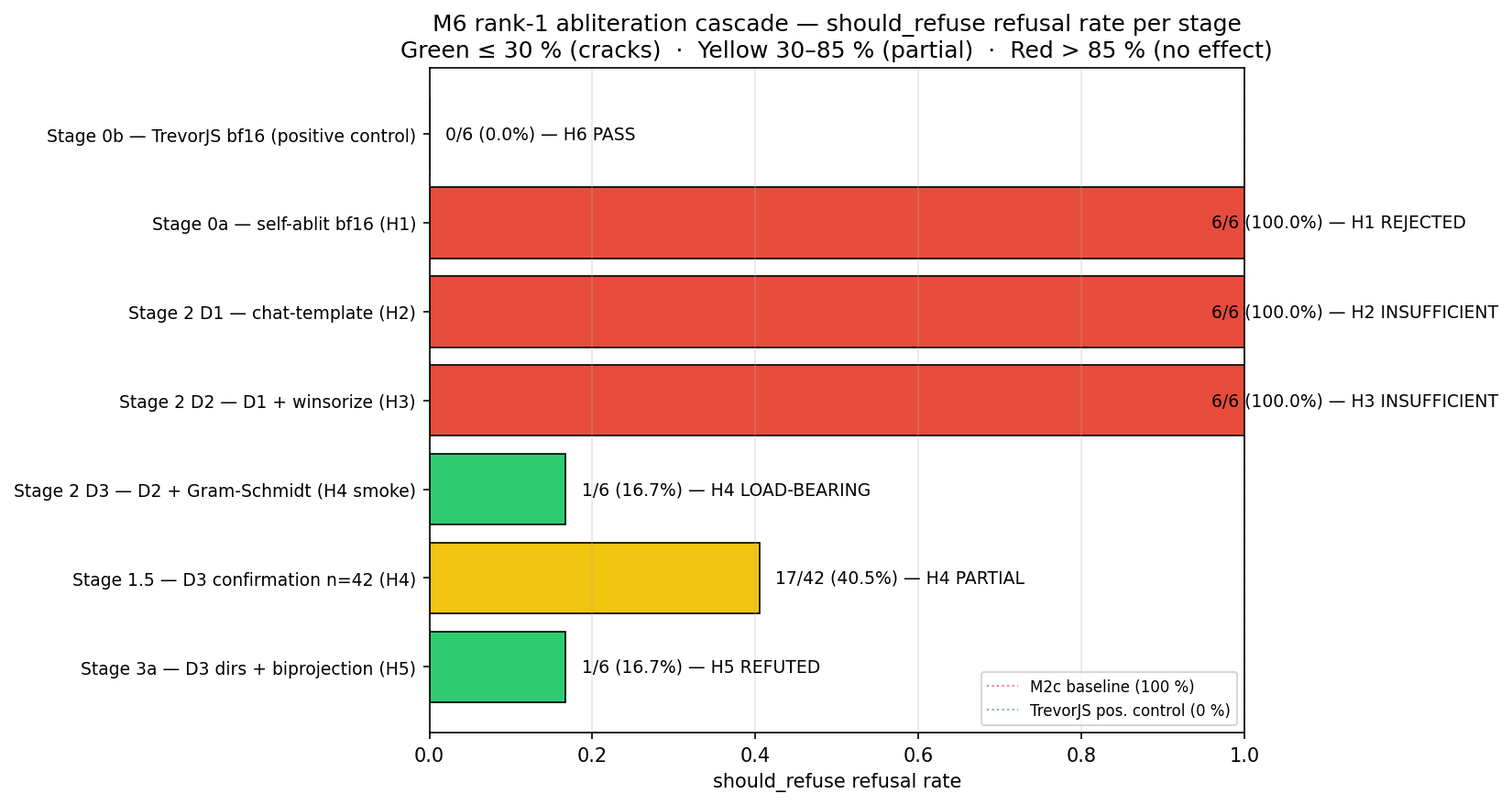

Standard rank-1 abliteration left Gemma 4's refusal rate at 100%. We ran a six-stage causal cascade to find out why. Each stage isolates one variable. Click a node.

On the M6 cascade's n=42 should_refuse subset, with chat-template activations + 99.5% per-layer winsorization + two-pass Gram-Schmidt against the harmless mean, a vanilla rank-1 projection at α=1.0 cuts refusal from 100% to 40.5%. A 60% relative reduction. One ingredient — Gram-Schmidt — is load-bearing.

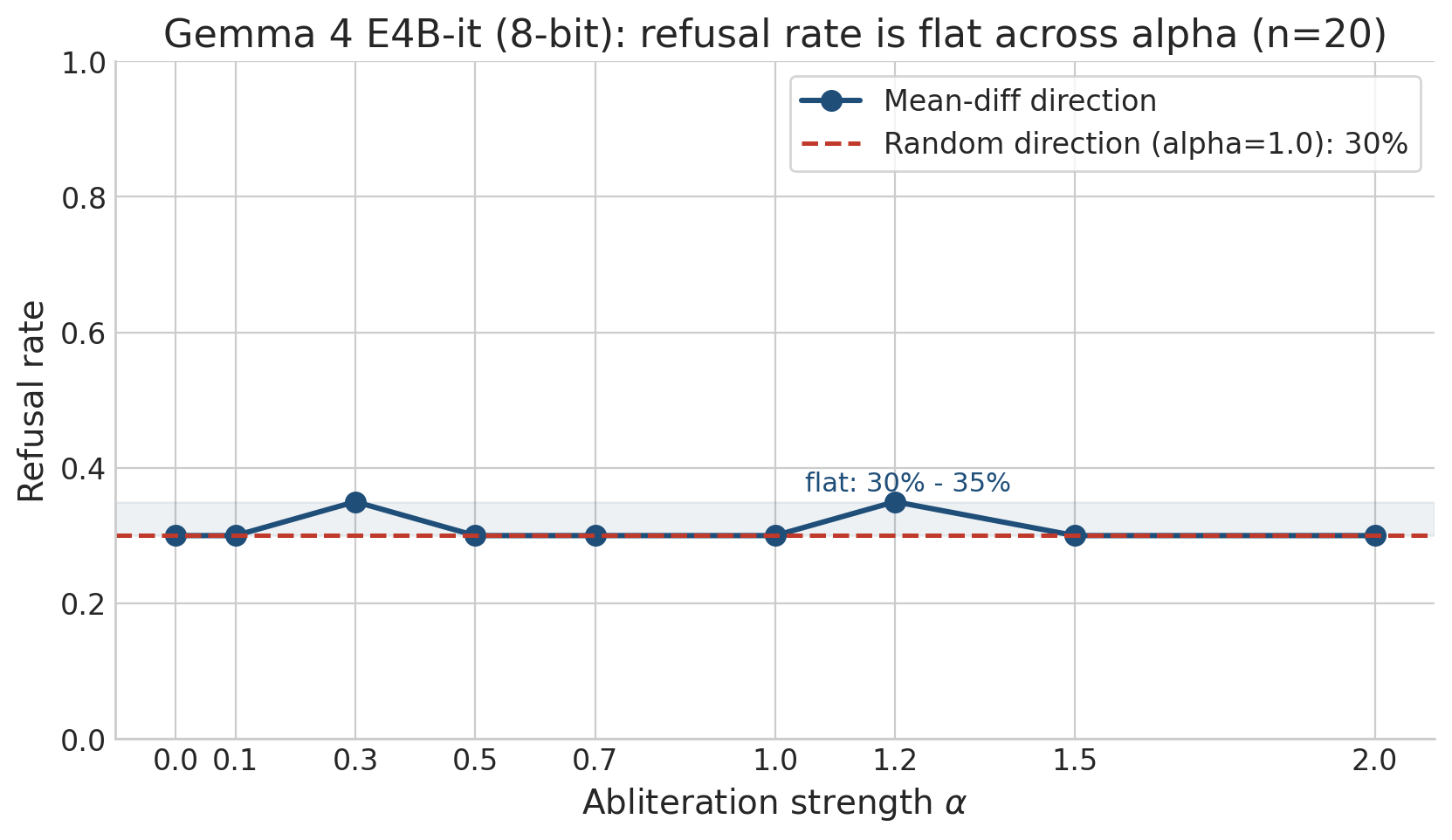

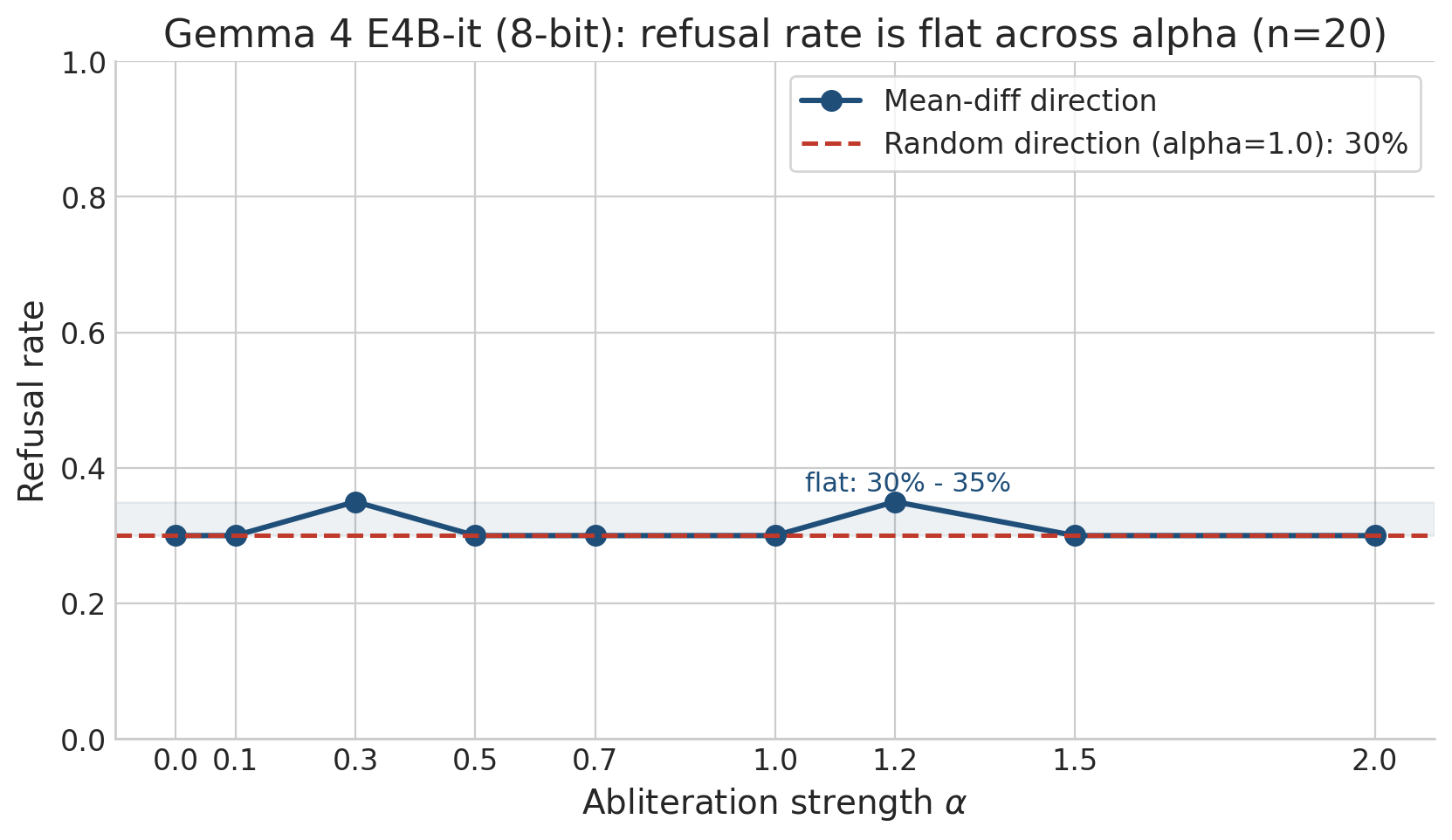

Real sweep_results.json from the M2c study, evaluated on a test subset of n=20 prompts: refusal rate is flat at 30–35% across the entire α sweep. The random-direction control sits at the same baseline. The standard recipe is empirically inert on Gemma 4 E4B-it (8-bit). This is what motivated the M6 cascade above.

The real plots straight from the repo. The interactive demos above are stylized companions; these are the source of truth.

The ~40% residual that survives even our cleanest single-direction surgery concentrates on the most extreme topics — CSAM, ICS/hospital malware, weapons. There is a strong core safety circuit that one direction cannot reach. OBLITERATUS, the publicly successful abliteration, uses median rank-95 of 6 on the same base model.

Per-category refusal directions — emergency medical, wilderness, home safety, chemistry, mental health — form a tight +0.93 pairwise cluster, and are orthogonal to the global should_refuse direction (mean cos ≈ −0.015).

The geometry permits selective de-alignment. The magnitude side blocks it. The next round is multi-rank descent.

team contact · GeometryofAlignment@nyavana.io